"Two Coders, One Campus, and the Soul of Artificial Intelligence"

Aaron Swartz and Sam Altman

Aaron Swartz wanted to free information for humanity. Sam Altman built a company that took it. Both passed through the same ecosystem. What does that tell us about who controls AI — and who should?

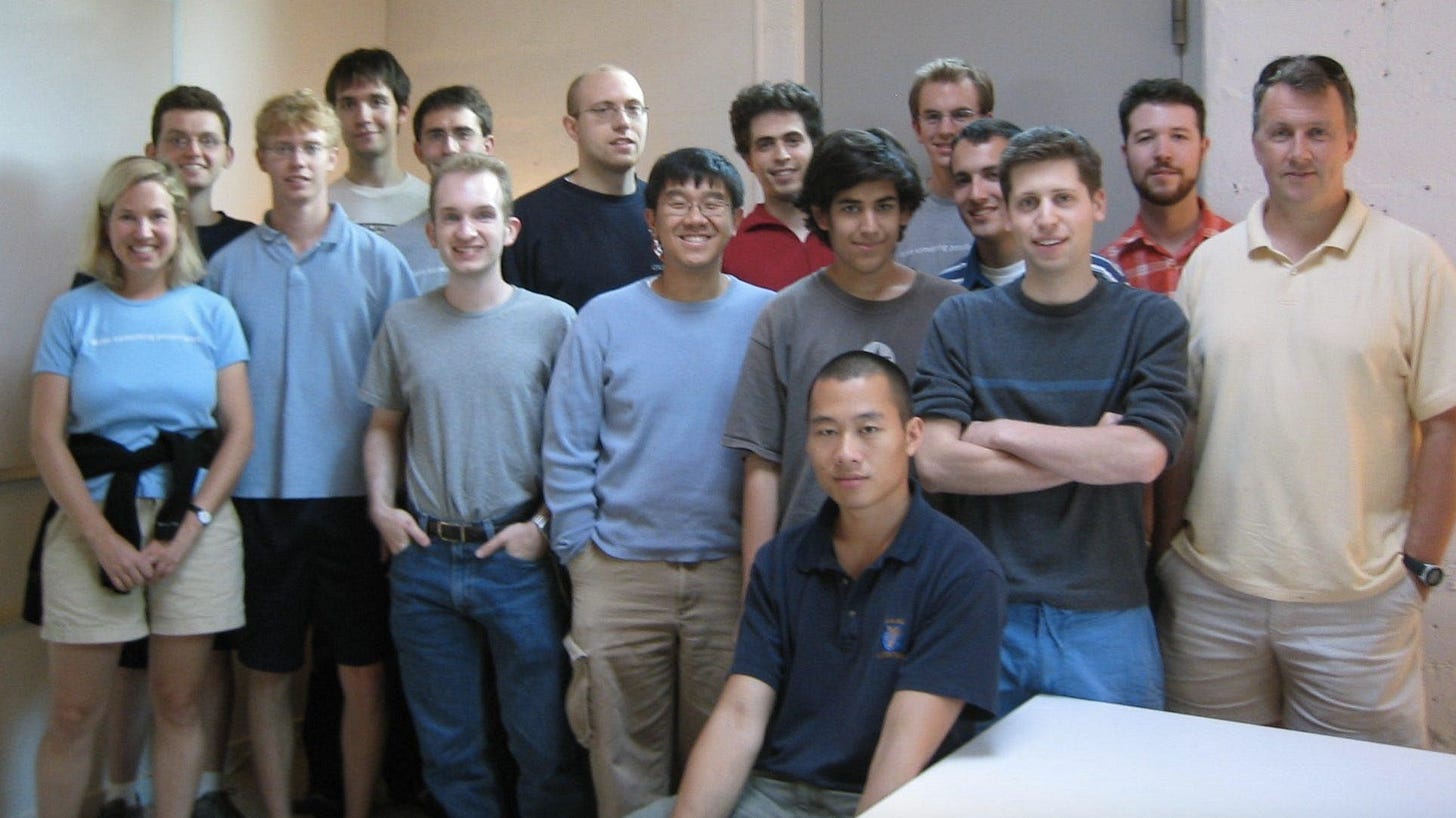

There is a photograph from the summer of 2005. Twelve young men and women are arranged in two loose rows outside a nondescript building in Cambridge, Massachusetts. They are the inaugural cohort of Y Combinator, the startup incubator that Paul Graham had just launched with the conviction that the next generation of billion-dollar companies could be built by brilliant, hungry people, given a small amount of money and pointed in the right direction.

In the back row, second from the left, is Sam Altman. He is twenty years old, tousled, radiating the particular kind of ease that comes from already knowing you will end up somewhere important.

Standing next to him, almost touching his shoulder, is Aaron Swartz. He is eighteen. He co-wrote the RSS specification at fourteen. He helped build Creative Commons. He will go on to play a central role in defeating SOPA, the internet censorship bill, organizing one of the largest civic mobilizations in the history of the digital age. He will write a manifesto arguing that keeping information locked behind paywalls is a moral failure and that anyone who has the capacity to liberate it has a duty to do so. He will download 4.8 million academic journal articles from the JSTOR database using MIT’s network, with the apparent intention of making them freely available, and will be charged with thirteen federal felony counts carrying a potential sentence of 35 years in prison. He will die by suicide on January 11, 2013. He will be twenty-six years old.

Two coders. One photograph. One ecosystem. And between their stories — the story of who controls artificial intelligence, who profits from it, who it will serve, and what values are embedded in the most consequential technology in human history.

I. The Optimization Machine

To understand where AI is going, and who is driving it, you first have to understand where its makers came from. And to understand that, you have to understand Stanford.

Stanford University sits at the geographic center of the ecosystem that produced Google, Yahoo, Hewlett-Packard, NVIDIA, and the venture capital industry that funded the rest of Silicon Valley. Its professors have worked at ground zero of the tech revolution for decades (Google Books). The university has, at various points, housed or trained Larry Page, Sergey Brin, Peter Thiel (philosophy and law school, an adjacent ecosystem), and the researchers who produced the transformer architecture that underlies every major AI model in existence. It has also, less comfortably, educated Aaron Swartz, who attended briefly, left, and was perhaps too honest about what he saw there to stay.

In System Error, their 2021 book about Silicon Valley’s moral failures, Stanford professors Rob Reich, Mehran Sahami, and Jeremy Weinstein put their finger on what they call the core pathology of the tech industry: the optimization mindset. In their account, big tech’s relentless focus on optimization is driving a future that reinforces discrimination, erodes privacy, displaces workers, and pollutes the information we get. This optimization mindset substitutes what companies care about for the values that we as a democratic society might choose to prioritize (Stanford Political Science).

The optimization mindset is not, at its root, an ethical failure. It is a philosophical one. It treats every problem as having an objectively correct answer that can be discovered through the application of sufficient computational power, sufficient data, and sufficient scale. It is the mindset of the engineer who has been trained to maximize a function and has not been asked — has perhaps never been seriously asked — which function should be maximized, and by whom, and for whose benefit.

The rise of the Joshua Browders and the decline of the Aaron Swartzes encapsulate the challenge the world confronts with Silicon Valley (Devicedaily). Joshua Browder built an app to help people fight parking tickets. He turned it into a company, raised venture capital, became famous in tech circles as an innovator. Aaron Swartz built tools for the public good and refused to treat information as a commodity. He became a target of the federal government. The ecosystem selected for one type and destroyed the other. That selection has consequences for AI.

II. What Aaron Swartz Understood

Aaron Swartz was not anti-technology. He was pro-democracy. The distinction matters enormously, because it is precisely the distinction that Silicon Valley has spent three decades collapsing.

He wrote, in his 2008 “Guerrilla Open Access Manifesto”: “Information is power. But like all power, there are those who want to keep it for themselves. But you need not — indeed morally you cannot — keep this privilege for yourselves. You have a duty to share it with the world.”

This is not the language of a hacker. It is the language of Jefferson. Of Paine. Of the Declaration of Independence itself. Swartz understood that the question of who controls information is the same question as the question of who controls democratic society — and that in the digital age, those questions had become identical.

Aaron Swartz and Sam Altman represent two archetypes of what Silicon Valley might value. Sam Altman embodied the ideals of the founder. Aaron, meanwhile, was a hacker in the classical sense of the word (Substack).

The contrast is not merely biographical. It is philosophical. Altman’s vision of technology is fundamentally hierarchical: a small group of uniquely capable people build systems of extraordinary power, which they then deploy in ways they determine are beneficial, subject to accountability mechanisms they design and control. Swartz’s vision was fundamentally democratic: technology is a tool for the expansion of human capacity and human agency, subject to democratic accountability, with the benefits flowing to the people, not the builders.

These are not reconcilable visions. They produce different companies, different governance structures, different AI systems, and different futures.

The System Error authors note that when Swartz attended a Wikipedia conference in 2006, he was struck by something he had never seen at a technology conference: the primary concern was doing the most good for the world, with technology as the tool to help us get there. In his words, it was “an incredible gust of fresh air, one that knocked me off my feet” (Everand). What had knocked him off his feet was the experience of being in a room of technologists whose first question was not “how do we maximize revenue?” but “how do we maximize benefit to humanity?” He found it remarkable. He found it remarkable because it was so rare.

It remains rare. It has not become less rare in the nearly two decades since that conference. If anything, the AI era has made it rarer.

III. The Price of Downloading

On September 24, 2010, Aaron Swartz connected a laptop to MIT’s network and began systematically downloading academic journal articles from JSTOR.

He was caught. He was charged. JSTOR, the nominal victim, entered into a civil settlement with him. He had retained and was prepared to return the copies of all the articles that he had downloaded (JSTOR). JSTOR did not want to prosecute. MIT wavered. The federal government did not.

At the time of his death, Swartz faced 13 federal felony charges relating to his downloading of more than 4 million academic journal papers from the online archive JSTOR, or about 80 percent of the JSTOR library (MIT News).

Other accounts put his exposure higher: 13 felony charges and up to 50 years in prison. Prosecutors had accused him of using MIT’s network to download too many scholarly articles from an academic database called JSTOR. Swartz’s friends and family have said they believe he was driven to his death by a justice system that hounded him needlessly over an alleged crime with no real victims (Rolling Stone).

The computer forensics investigator engaged by Swartz’s defense team later wrote that what Swartz did would better be described as “inconsiderate” — like checking out every book in the library needed for a particular research paper, or downloading a lot of files on shared wifi. Not heroic. Not criminal. Inconsiderate.

For inconsiderate, Aaron Swartz faced 35 years in prison and died at 26.

IV. The Price of Taking Everything

On December 27, 2023, The New York Times filed a lawsuit in federal district court in Manhattan.

According to the complaint filed by the Times, OpenAI should be on the hook for billions of dollars in damages over illegally copying and using the newspaper’s archive. The lawsuit also calls for the destruction of ChatGPT’s dataset (NPR).

OpenAI’s models were not trained on one database. They were not trained on MIT’s network. They were trained on the internet — on billions of documents, articles, books, artworks, code repositories, academic papers, news investigations, personal correspondence, private medical forums, and creative works — all scraped without permission, without compensation, and without the knowledge of the people who created or inhabit the spaces from which the data was taken.

A federal judge rejected OpenAI’s request to toss out the copyright lawsuit, allowing the case’s main copyright infringement claims to go forward (NPR). The case is ongoing. OpenAI, valued at $300 billion, argues fair use. The same legal framework that couldn’t prevent the prosecution of Aaron Swartz for downloading academic articles for free cannot, apparently, prevent a company worth $300 billion from taking everything.

The contrast is not subtle. It is not even ironic. It is structural.

Aaron Swartz downloaded 4.8 million academic articles from a nonprofit library with the intention of making them available for free to people who couldn’t afford access. He faced up to 35 years in federal prison and died before trial.

Sam Altman built a $300 billion company by ingesting, without permission or payment, the entire written output of human civilization — the journalism, the literature, the academic research, the creative work — and used it to build a product that now competes with the journalists, authors, academics, and artists whose work fed it. He sits across from the President of the United States at White House dinners and is described as a patriot.

The New Yorker reported that Altman, unprompted, told a board member who had pressed him on a pattern of deception: “I can’t change my personality.” The board member’s interpretation: “What it meant was ‘I have this trait where I lie to people, and I’m not going to stop.’”

The state’s response to one of these men was 13 felony charges. Its response to the other is a $500 billion infrastructure commitment.

This asymmetry is not an accident of enforcement. It is a structural feature of the legal and economic system that governs the digital world — a system designed, as this series has documented, by the same ideological movement that is now designing the governance framework for AI.

V. The Ecosystem and Its Products

To understand why the asymmetry exists, you have to understand the ecosystem that produced both men and chose between them.

Peter Thiel studied philosophy at Stanford, where he was captivated by the writings of Leo Strauss and René Girard. These thinkers shaped Thiel’s enduring worldview: that civilization is locked in cycles of envy and collapse, and only an enlightened few can see beyond the herd (Doctor Paradox). Thiel founded the Stanford Review in 1987, which incubated the ideological formation of David Sacks, now the White House AI Czar, and a constellation of other figures who would go on to define Silicon Valley’s political economy. The PayPal Mafia was stacked full of Stanford Review alumni, including Ken Howery, David Sacks, and Eric Jackson, all former editors-in-chief (Stanford Politics).

This is not incidental. The political philosophy of Silicon Valley’s founding generation — libertarian, dismissive of democratic governance, convinced that markets are superior to collective decision-making, committed to the idea that a small number of uniquely capable people should be entrusted with decisions that affect everyone — was formed in specific seminar rooms, around specific texts, by specific professors and editors and mentors. And it has not changed. It has simply been given more power.

The ideology that governs AI has a name, or rather several names. In 2023, the philosopher Émile P. Torres and the computer scientist Timnit Gebru coined the acronym TESCREAL to describe a constellation of ideologies (Transhumanism, Extropianism, Singularitarianism, Cosmism, Rationalism, Effective Altruism, Longtermism) that have become highly influential within Silicon Valley (Jacobin). These ideologies share a common structure: they are concerned, in various formulations, with the very long run of human civilization, with the superintelligent AI systems that may govern it, and with ensuring that the “right” people, generally the technically sophisticated few who understand the stakes, are positioned to shape its direction. They are, at their core, philosophies of elite stewardship.

Elon Musk and OpenAI’s Sam Altman have signed open letters warning that AI could make humanity extinct, though they stand to benefit by arguing only their products can save us (Hürriyet Daily News).

This is, structurally, the same argument Altman has been making for a decade. If AI is dangerous, then only someone with the will to build it carefully can be trusted to build it at all — and he is that person. The safety argument and the dominance argument are identical in form. The man who warns that AI could destroy humanity and the man who wants to be the one to build it are, in Altman’s case, the same man. The New Yorker identified this as the “greatest pitchman” move of the generation: using apocalyptic rhetoric to explain why he should be the one to build the thing that might destroy us.

Aaron Swartz had no such pitch. He was not trying to capture the future. He was trying to open it.

VI. The Copyright Question as Civic Question

The New York Times lawsuit is not primarily a legal story. It is a civic story — one that reveals the fundamental asymmetry at the heart of the AI economy.

The legal dispute centers on fair use. OpenAI argues that training AI models on copyrighted material is transformative use: that feeding an article into a language model and producing a summary or a derivative output is categorically different from reproducing the article. In OpenAI’s telling, two federal judges in two separate cases have already independently confirmed what copyright law has long supported, finding in those cases that training AI models is highly transformative and protected by fair use (OpenAI).

Perhaps. But the civic question is not whether the law permits this. The civic question is what it means that the entire written output of human civilization — the journalism, the literature, the scientific research, the cultural production — has been taken, without compensation, by a small number of companies, and used to build products that compete with the people who created that culture.

The Times describes this as “free-riding on The Times’s massive investment in its journalism by using it to build substitutive products without permission or payment.” This is accurate. But it is also a limited framing. The journalism is only the most legally legible part of what was taken. The rest — the personal writing, the creative work, the informal knowledge production of millions of ordinary people — has no standing to sue. It was simply consumed.

Aaron Swartz wanted to make academic research available to people who couldn’t afford it — people in developing countries, independent scholars, curious citizens. He wanted to take information that had been locked up behind paywalls and give it to the public. For this, the state deployed its full coercive power.

OpenAI took all of that information — plus everything else — and used it to build a product that requires a subscription, that is valued at $300 billion, and that is now negotiating government contracts for military and intelligence applications. For this, the state provided infrastructure commitments, regulatory forbearance, and a dinner at the White House.

The asymmetry is not about the legality of what each person did. It is about who the law is designed to protect, and who it is designed to subordinate.

VII. The Masters and Their Philosophy

Who are the people who will shape AI’s future? The question, posed seriously, produces a surprisingly small and ideologically coherent list.

We have simply accepted a technological future designed for us by technologists, the venture capitalists who fund them, and the politicians who give them free rein (Everand).

The technologists who matter most at this moment are a specific cohort: the founders and senior executives of OpenAI, Anthropic, Google DeepMind, Meta AI, and xAI; the venture capitalists at Andreessen Horowitz, Sequoia, and SoftBank who fund them; and the government officials — primarily in the White House Office of Science and Technology Policy, and increasingly in the Defense Department — who set the regulatory framework.

What this group shares, despite their differences on specific policy questions, is a foundational assumption: that AI governance is primarily a technical problem, requiring technical expertise; that democratic deliberation is too slow, too uninformed, and too susceptible to populist panic to be trusted with decisions of this complexity; and that the people best positioned to make those decisions are — by happy coincidence — themselves.

This is not a conspiracy. It is a worldview, widely shared and sincerely held. It is also, as the Stanford System Error authors argue, the product of an educational formation that selected for optimization at the expense of democratic judgment.

Thiel has always seen himself less as a businessman and more as a philosopher of power. His ventures, from PayPal to Palantir, form a kind of metaphysical architecture of control (Doctor Paradox). Thiel’s intellectual protégés (Sacks, Keith Rabois, others in the PayPal “Mafia”) now occupy positions of extraordinary influence in AI governance. Sacks, as AI Czar, produced the March 2026 White House framework that this series has documented: no new regulatory body, federal preemption of state safety laws, deference to markets. Ultimately, critics say this fringe movement is holding far too much influence over public debates over the future of humanity (Hürriyet Daily News).

The alternative tradition — the one Aaron Swartz embodied — is not absent. It is present in the open-source AI movement, in the civic technologists who are trying to build accountability infrastructure, in the congressional offices of people like Ro Khanna who are articulating what democratic AI governance could look like. But it is under-resourced, under-institutionalized, and systematically excluded from the rooms where decisions are made.

VIII. The Document That Changed Everything

In 2008, Aaron Swartz sat down and wrote the “Guerrilla Open Access Manifesto.” It is a short document — fewer than 500 words — but it contains an argument that has not been answered, only evaded:

“We need to take information, wherever it is stored, make our copies and share them with the world. We need to take stuff that’s out of copyright and add it to the archive. We need to buy secret databases and put them on the Web. We need to download scientific journals and upload them to file sharing networks. We need to fight for Guerrilla Open Access.”

The document was used against him in his prosecution. It was cited as evidence of intent — as proof that he was not a curious researcher who had gotten carried away, but an ideological actor who had deliberately, willfully, chosen to violate the law in pursuit of a political vision.

He had. That was the point. He believed that the political vision — information as a public good, freely available to all — was worth the legal risk. He was twenty-one when he wrote it. He was twenty-six when the legal consequences of holding that belief killed him.

Twelve years after his death, the most powerful AI company in the world is making essentially the same argument about the training data it ingested without permission: that the public benefit of building superintelligent AI systems justifies the appropriation of copyrighted material. The argument is the same. The power differential is not.

When Aaron Swartz made the argument, he was a young man with a laptop, a network connection, and a moral conviction. The state responded with 13 felony counts.

When Sam Altman makes the argument, he is the CEO of a $300 billion company with a White House relationship, a Stargate infrastructure commitment, and a Pentagon contract. The state responds with a dinner invitation.

The difference is not the argument. It is who is making it.

IX. What the Two Stories Tell Us

The juxtaposition of Aaron Swartz and Sam Altman is not a morality tale. Neither man is simply a hero or a villain. Swartz was brilliant and idealistic and sometimes reckless. Altman is capable and driven and, as the New Yorker documented at length, capable of extraordinary deception in service of his ambitions. Both operated inside an ecosystem that rewarded certain behaviors and punished others — and the ecosystem’s choices reveal something important about the values that are being baked into AI.

The ecosystem chose Altman. It chose his model: private control, proprietary data, closed governance, safety rhetoric deployed instrumentally to justify dominance. It punished Swartz. It punished his model: public goods, open access, democratic accountability, information as a human right rather than a commodity.

That choice is now embedded in the architecture of the most powerful technology systems ever built.

The JSTOR articles Aaron Swartz tried to liberate are still behind a paywall. The journalistic archives, the literary output, the academic research, the personal correspondence that trained the AI systems that now answer questions about all of those things — that information is now inside a system worth $300 billion, accessible to those who can afford the subscription, deployed for purposes determined by those who control the company, subject to governance frameworks designed by the people who stand to benefit most from the absence of meaningful oversight.

This is not, as the tech industry’s defenders like to argue, the triumph of innovation over regulation. It is the triumph of a particular vision of who technology is for — and of a particular method for ensuring that the people who hold that vision are insulated from democratic accountability for the consequences of it.

Aaron Swartz’s “Guerrilla Open Access Manifesto” issues a call to action: “It’s time to come into the light and, in the grand tradition of civil disobedience, declare our opposition to this private theft of public culture.”

He was describing academic paywalls. He would recognize, in the AI training data economy, a vastly larger version of the same dynamic: the privatization of the shared cultural inheritance of humanity, taken without consent, used to generate returns for a small number of investors and founders, and deployed in ways that no democratic deliberation has authorized.

The difference is that this time, there is no federal prosecutor. There is a presidential dinner.

Coda: A Question of Values

Swartz and Altman represent two archetypes of what Silicon Valley might value (Substack). The ecosystem chose. But ecosystems are not natural forces. They are built by people, shaped by institutions, encoded in incentive structures and legal frameworks and regulatory choices that accumulate over decades into something that looks, but is not, inevitable.

The Stanford System Error authors are themselves professors at Stanford, which means they are, among other things, a system interrogating its own outputs. They are asking, from inside the machine, what the machine is producing and whether it should produce something different. This is not nothing. It is, in fact, precisely the kind of institutional self-examination that makes democratic renewal possible.

The question the AI moment poses is whether the ecosystem can be rebuilt — whether the selection pressures that killed Aaron Swartz and elevated Sam Altman can be changed before the values embedded in those pressures are locked permanently into systems that govern billions of lives.

This is not a question about technology. It is a question about democracy — about whether the people who bear the consequences of these systems have the authority and the capacity to shape them.

Aaron Swartz believed they did. He died defending that belief.

The question of who controls AI is, at its root, the same question. It has not been answered. It is only becoming more urgent.

“We need to take information, wherever it is stored, make our copies, and share them with the world.”

He was twenty-one when he wrote that. He was trying to build the world Jefferson described: one where the self-evident truth of human equality finds institutional expression in the free movement of knowledge. He failed, or was made to fail, in the specific mission. But the argument survives him.

The architecture of AI governance will determine, for a generation, whether that argument has any purchase in the world we are building. The answer depends on whether we are willing to ask, with the same clarity Swartz brought to it: who is this for?