Nobody Knows What Is Going to Happen

Introducing the Civic Curriculum — Eight Essays on AI, Work, and the Democratic Response

Toward a Social Contract for Citizen Thriving

The People’s Commission on Technology and the American Future

That sentence should appear far more often in the public conversation about artificial intelligence than it does. The technology companies building these systems project a transformation so profound that they are spending more than $660 billion on infrastructure in a single year. That is more than the GDP of Sweden, more than the United States spent building the entire interstate highway system (adjusted for inflation), and more than any private capital expenditure on any technology in human history. The economists projecting AI’s workforce impact disagree with one another by an order of magnitude. MIT’s Daron Acemoglu, a 2024 Nobel laureate in economics, projects that AI will automate roughly five percent of tasks and add about one percent to GDP over the next decade; Goldman Sachs projects that it will automate a quarter of all work tasks globally and boost productivity by nine percent. The venture capitalists funding the build-out are not sure either. One of AI’s most consequential investors calculated in 2024 that the gap between what AI companies need to earn and what they actually earn was $600 billion and growing, and concluded that it is entirely possible to believe “AI will change the world” and “the investment is unsustainable” at the same time.

The honest answer to “what will AI do to the economy?” is: we don’t know. We know more than we did two years ago. We know less than we will two years from now. The range of plausible outcomes is wider than either the evangelists or the skeptics want to acknowledge.

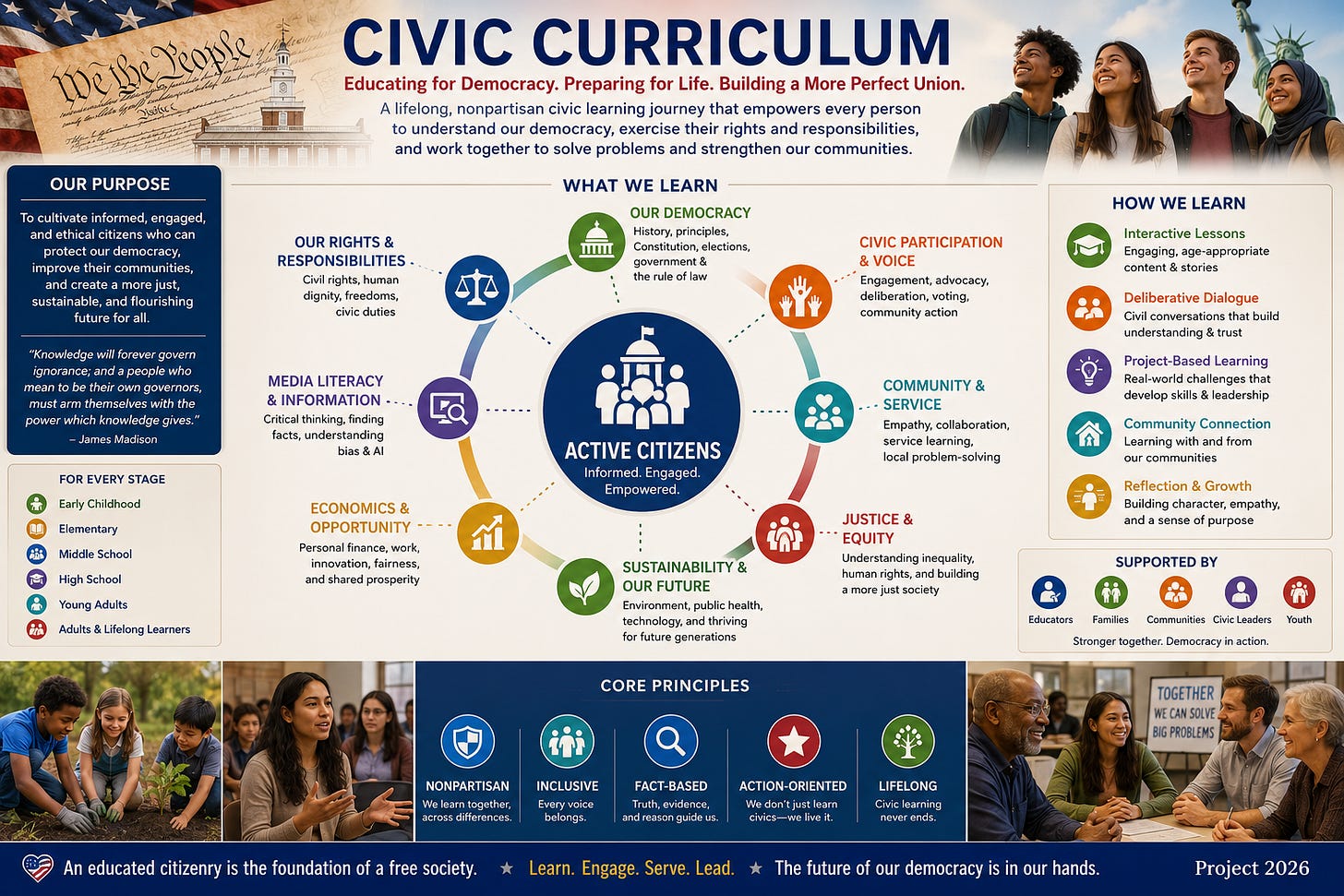

The Civic Curriculum was written because every scenario in that range requires a democratic response that does not yet exist — and because the scenario that demands the most urgent preparation is the one for which the least has been done.

Why This Curriculum Exists

Something is happening to work in America, and most of the people it is happening to have not been given the tools to understand it, the institutions to respond to it, or the democratic architecture to demand that their representatives do something about it.

Artificial intelligence (specifically, the large language model systems now deployed across every sector of the economy) is performing the cognitive tasks that have sustained the professional and knowledge-working classes for half a century, and it is doing so at scale, at speed, and at a marginal cost approaching zero. The billing specialists, the junior analysts, the legal associates, the financial administrators, the software developers in the early stages of their careers: these are not the subjects of a thought experiment about a distant future. They are the first cohort of a displacement that hundreds of billions of dollars of committed capital are organized to accelerate.

The public conversation about this transformation has produced awareness without architecture. The mainstream press documents the disruption with increasing urgency but without a civic frame that connects reporting to democratic action. The podcast ecosystem generates depth without democratic reach — substantive long-form analysis that rarely arrives at the workers and communities most directly affected. Social media makes the emotional experience of displacement visible at scale while providing no institutional infrastructure for converting fear into organized demand. And the companies building the technology are now acquiring the platforms that explain it — a concentration of informational and political influence that James Madison would have recognized immediately as the faction problem in its most consequential modern form.

This series exists because citizens cannot deliberate well about what they do not understand. They cannot hold elected officials accountable for policies they cannot evaluate. And they cannot recognize what is genuinely unprecedented about the AI moment if they do not understand the history of every prior moment that was said to be unprecedented.

The Four Scenarios You Need to Understand

Before we can build a democratic response, we need an honest map of what we might be responding to. The evidence supports at least four distinct scenarios. They are not equally likely. But they are all plausible — and a serious civic conversation must reckon with each.

Scenario One: The technology delivers. AI achieves the productivity gains its proponents project. New industries emerge. New categories of work are created. The optimists are right — not about the absence of disruption, but about the net outcome. The economic pie grows large enough that the displacement of tens of millions of workers could, in principle, be managed through redistribution, retraining, and the emergence of new employment.

Even in this scenario, the transition requires institutional architecture that does not currently exist. Productivity gains do not distribute themselves. The historical record is unambiguous: every prior technology revolution that increased aggregate wealth also concentrated that wealth in the hands of those who owned the technology, unless democratic institutions intervened. The Gilded Age was extraordinarily productive. It was also extraordinarily unequal — and it took a generation of progressive reform, labor organizing, and eventually the New Deal to build the institutions that converted productivity into broadly shared prosperity. The question under Scenario One is not whether AI creates value. It is whether anyone is building the institutions to ensure that the value is shared.

Scenario Two: The technology delivers partially. This is the scenario best supported by current enterprise data. Across multiple surveys — McKinsey, BCG, Accenture, PwC — roughly five to thirteen percent of firms report transformational returns from AI. The remaining eighty-seven to ninety-five percent are experimenting, piloting, or seeing no measurable impact. Software development, customer service, and specific knowledge-work applications show genuine productivity gains. Manufacturing, healthcare delivery, legal practice, and education show more modest results, complicated by reliability limitations and the stubborn gap between impressive demonstrations and dependable real-world deployment.

Under Scenario Two, the disruption is real but uneven. Some sectors experience significant displacement; others experience augmentation without major job loss. The aggregate economic impact is positive but modest. The investment cycle corrects rather than crashes. But this scenario is, in many ways, the hardest to prepare for: it generates displacement without generating the productivity abundance that would make generous transition programs economically painless. The workers displaced in the sectors where AI works well face real job loss, real identity disruption, and real community consequences — while the aggregate gains are too modest to fund a robust response. The policy challenge is acute precisely because the case for large-scale investment in displaced workers is weakest when the overall economy hasn’t grown enough to make it feel affordable.

Scenario Three: The investment bubble bursts. The gap between AI infrastructure spending and AI-generated revenue proves unsustainable. Companies that bet heavily on AI face write-downs, layoffs, and market corrections. The financial parallels to the dot-com bust of 2000–2001 become operative rather than rhetorical. The critical finding from historical precedent is stark: displacement does not reverse when the investment thesis fails. After the dot-com crash, Silicon Valley took approximately sixteen years to recover to its prior employment peak. A burst AI bubble would not restore the jobs eliminated during the expansion. It would compound the displacement with a contraction — workers displaced by AI automation would face a labor market in which the AI sector itself is shedding jobs and the economic confidence that might have funded transition programs has evaporated.

Scenario Four: The worst of both worlds. This is the scenario that two Nobel laureates — Daron Acemoglu and Joseph Stiglitz — have each independently identified as the most dangerous. AI proves capable enough to displace workers but not productive enough to generate the economic abundance that would offset that displacement. Acemoglu calls this “so-so automation”: technology that is good for corporate margins but marginal for overall productivity and devastating for the workers it replaces. Stiglitz has described a scenario in which the AI bubble bursts while AI is simultaneously displacing workers — a double hit for which, he said, “we do not have the macro or micro framework for managing that kind of displacement.”

A 2026 economic model by researchers at the University of Pennsylvania and Boston University, “The AI Layoff Trap,” demonstrates why this scenario is not merely possible but structurally likely. In competitive markets, rational firms will automate well past the point where doing so harms their own profits. Each firm captures the full cost savings of replacing workers with AI, but it bears only a fraction of the lost consumer demand that results when displaced workers cut their spending. The result is a Prisoner’s Dilemma: every firm automates because its competitors are automating, and collectively they hollow out the consumer base they all depend on. The researchers tested the most commonly proposed solutions (wage adjustments, worker ownership, universal basic income, retraining, even direct firm negotiation) and found that none of them eliminates the structural incentive to over-automate.
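A toy calculation can make the incentive at the heart of that finding concrete. This sketch is hypothetical and is not the researchers’ model. Purely for illustration, it assumes three things. Each firm earns a baseline profit of 100. Automating saves a firm 40 in labor costs. Each firm’s automation removes 60 of consumer spending, and that loss is shared equally among all firms. The function name `firm_profit` and every number in it are invented.

```python
# Toy illustration of an automation externality (a prisoner's dilemma).
# HYPOTHETICAL: all parameters below are invented for illustration and are
# not taken from "The AI Layoff Trap" or any other study.

def firm_profit(automates: bool, others_automating: int, n_firms: int,
                base: float = 100.0, savings: float = 40.0,
                demand_loss: float = 60.0) -> float:
    """Profit of one firm, given its own choice and how many rivals automate.

    Each automating firm keeps its full labor-cost `savings`, but the
    consumer spending it destroys (`demand_loss`) is spread evenly across
    all `n_firms`, so every firm bears only a 1/n_firms share of it.
    """
    total_automating = others_automating + (1 if automates else 0)
    own_savings = savings if automates else 0.0
    shared_loss = demand_loss * total_automating / n_firms
    return base + own_savings - shared_loss


if __name__ == "__main__":
    n = 2
    for others in range(n):  # number of OTHER firms that automate: 0 or 1
        keep = firm_profit(False, others, n)
        automate = firm_profit(True, others, n)
        print(f"rivals automating={others}: keep workers={keep}, automate={automate}")
    # Compare the two symmetric outcomes.
    print("everyone keeps workers:", firm_profit(False, 0, n))
    print("everyone automates:", firm_profit(True, n - 1, n))
```

Run with two firms, the sketch shows that automating pays whatever the rival does. A firm earns 110 rather than 100 if its rival keeps its workers, and 80 rather than 70 if the rival automates. Yet when both automate, each ends up with 80 instead of the 100 both would have earned by keeping their workers. In this toy version, the trap appears whenever a firm’s savings exceed its own share of the lost demand (here, 60 divided by the number of firms) but fall short of the total lost demand.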

Why the Democratic Response Is the Same Across All Four

This Curriculum does not claim to know which of these scenarios will unfold. It does claim — on the basis of the evidence assembled in the chapters that follow — that the democratic response required is broadly similar across all four, and that the response to the most severe scenarios has received the least preparation.

Under every scenario, workers are displaced. The scale varies, from Acemoglu’s estimate of roughly five percent of tasks to Goldman Sachs’s estimate that the equivalent of three hundred million full-time jobs worldwide could be exposed to automation, but the direction is consistent. Under every scenario, the displacement is concentrated among workers whose professional identities are organized around the cognitive tasks that large language models now perform: the analysts, the writers, the programmers, the legal researchers, the financial modelers, the customer service professionals. These are not the communities with the deepest experience of collective economic adversity. They are not the communities with the strongest mutual-aid traditions or the most robust institutional infrastructure for navigating displacement. They are, in many cases, the communities that were told explicitly and repeatedly, by the educational institutions and the professional culture that shaped them, that their cognitive skills were the assets that could not be automated.

Under every scenario, the existing policy infrastructure is inadequate. The federal AI legislative framework offers only four pages of workforce recommendations. The President’s Council of Advisors on Science and Technology is composed of twelve technology executives and zero labor economists. There is no mandatory displacement reporting, no funded transition program, no portable benefits architecture, and no democratic advisory infrastructure representing workers alongside industry. These gaps are not scenario-dependent. They exist under every version of the future.

And under every scenario, the human consequences of displacement extend beyond income loss to the dimensions that the conventional policy conversation has failed to name.

Work is not primarily an economic transaction. It is a health-creating institution — the most reliable daily source of what the public health researcher Aaron Antonovsky identified as the Sense of Coherence: the experience that the world is comprehensible, that its demands are manageable, and that what one does with one’s days is meaningful. When that coherence is destroyed faster than communities can rebuild it, the consequences are not merely economic. They are physiological, psychological, and civic.

The deaths of despair that followed American deindustrialization — more than 600,000 lives lost to suicide, overdose, and alcoholic liver disease — were not caused by income loss alone. They were caused by the simultaneous destruction of the full architecture of coherence in communities where no adequate replacement was ever built. That precedent governs this Curriculum’s sense of urgency — not because the AI transition will necessarily replicate those outcomes, but because the failure to prepare for the possibility that it could is a civic failure of the first order.

The Asymmetry That Justifies Urgency

If the optimists are right and the transition is managed well, the cost of having prepared too aggressively is modest: we will have built institutions and infrastructure that strengthen democratic life regardless of whether they are needed for displacement.

If the pessimists are right and the transition is not managed, the cost of having prepared too little is measured in human lives, in civic capacity, and in the democratic resilience of the republic.

That asymmetry is why we focus where we focus. It is also why the Civic Curriculum applies a single evaluative standard to every policy proposal, every corporate promise, and every elected official’s record — a standard we call the salutogenic standard, after Antonovsky’s science of what creates health rather than what produces disease.

The question is not: does this policy increase GDP? It is: does this policy restore the conditions under which the workers and communities affected by AI displacement can experience their lives as comprehensible, manageable, and meaningful?

A policy that replaces lost wages without rebuilding identity, community, and civic capacity is solving the wrong problem with the right resources. The Civic Curriculum is written to ensure that citizens can recognize the difference — and demand better.

What the Eight Chapters Cover

The Curriculum proceeds in eight installments, each written for the citizen rather than the specialist, each designed to build on the one before it.

Chapter 1: What Work Is For. The foundational essay. Drawing on Hannah Arendt, Sigmund Freud, and Aaron Antonovsky, it establishes the argument that animates everything that follows: work is not primarily an economic instrument. It is the primary arena in which most adults build identity, sustain community, and exercise the economic independence that democratic citizenship requires. Understanding what work actually does for human beings is the prerequisite for any honest reckoning with what its loss actually costs.

Chapter 2: Technology and the Transformation of Work. A historical account. From the spinning jenny through the assembly line to the personal computer, the essay traces the successive waves through which technology has reorganized human labor. Each wave displaced work that seemed irreplaceably human, each produced concentrated suffering in the most exposed communities, and each eventually generated institutional responses that arrived too late for the people who needed them most. The essay establishes why the present transition may break from that familiar pattern in speed, in breadth, and in the unprecedented character of its primary target.

Chapter 3: The Large Language Model Revolution and Its Workforce Consequences. A plain-language account of the technology. What large language models actually are and actually do — neither the science fiction of artificial general intelligence nor the dismissive “autocomplete” framing — and the investment logic driving corporate AI deployment at a scale and pace that no amount of corporate social responsibility rhetoric will counteract.

Chapter 4: The Consumption Paradox. The structural contradiction. Workers are also consumers. An economy that captures its productivity gains almost entirely for owners while imposing its displacement costs on workers is not a more efficient economy; it is a less stable one. This chapter examines the historical precedents, from Henry Ford’s five-dollar day to Keynes’s paradox of thrift. It argues that AI-driven displacement of the professional middle class threatens the consumer-demand foundation of the American economic model itself.

Chapter 5: The Policy Response: Urgency Without Architecture. An honest assessment of what has been done and what has been left undone. Four pages of workforce recommendations in the federal AI legislative framework. Bipartisan bills introduced and not enacted. Forty-five states with AI legislation and no funded national transition architecture. This chapter names the gap — and identifies the structural reasons, rooted in the organization of political power, that explain it without excusing it.

Chapter 6: Emerging Policy Frameworks and Proposed Responses. A rigorous, nonpartisan survey of the full range of serious proposals, from short-time compensation and wage insurance to universal basic income, worker ownership frameworks, and David Shapiro’s Post-Labor Economics architecture. The disagreements among these proposals are presented as honest disagreements, because the question of what democratic societies owe their citizens in an age of intelligent machines is precisely the kind of question that expertise alone cannot resolve. It requires citizens.

Chapter 7: How the Media Is Covering AI and Work — and What It Is Missing. A civic media assessment. What the mainstream press, the podcast ecosystem, and social media are providing — awareness, anxiety, coverage — and what they are not providing: civic tools, democratic accountability, and independent scrutiny of the industry most actively shaping the narrative about itself.

Chapter 8: The Salutogenic Standard. The capstone. The full arc of the preceding seven chapters brought to bear on a single question: what does an adequate response to the AI workforce challenge actually require, measured not against the standard of what is politically convenient but against what actually sustains human health? This essay converts the Curriculum’s diagnosis into a democratic demand — the standard against which the People’s Commission will evaluate every proposal, every commitment, and every elected official’s record.

How to Read This Series

These essays are written to be read before a forum and argued about during one. They assume that citizens are capable of engaging with serious evidence and complex argument — because that assumption is the prerequisite of democratic self-governance, and because everything that follows depends on it.

Each chapter stands on its own, but the chapters are also designed to build on one another. The argument that runs through every one of them accumulates across eight installments into a case that no single essay can make alone. That argument holds that work is a health-creating institution, that its disruption is a civic emergency, and that the democratic response must meet the full scale of what is at stake.

We will publish them in sequence in this section of Moonshot Press. We invite you to read them, share them, disagree with them, and bring the arguments to the elected officials and civic institutions that must eventually be held accountable to them.

The People’s Commission on Technology and the American Future is convening its founding conference soon. The Citizens’ Declaration that emerges from that conference will be delivered to every candidate before the election. The Accountability Scorecard is already in circulation. The work has begun.

These eight essays are the intellectual foundation on which that work stands. They are the People’s Commission’s contribution to the proposition that an informed citizenry is not a luxury of democratic life. It is its precondition.

We begin now. Chapter 1, “What Work Is For,” is coming soon.

“The care of human life and happiness, and not their destruction, is the first and only legitimate object of good government.”

— Thomas Jefferson

Moonshot Press is a publishing arm of the People’s Commission on Technology and the American Future. We are nonpartisan, constitutionally grounded, and committed to the proposition that the democratic response to AI displacement must be built by citizens — not handed down by experts.